Enterprise AI Deployment Is Quietly Rewriting Workforce Agreements and Vendor Contracts

For many companies, AI adoption began as a productivity conversation.

A team member used a generative tool to draft proposals. Product teams layered AI into internal workflows. Customer support piloted summarization. Engineers began fine-tuning internal assistants on company knowledge. At first, the legal questions seemed manageable.

Then the outputs started becoming business assets.

That is where the risk now lives.

The Department of Labor’s new partnership with the National Science Foundation on the TechAccess: AI-Ready America initiative makes clear that federal attention is shifting from AI innovation alone toward workforce readiness, governance, and deployment accountability across businesses and public systems. The initiative is explicitly designed to help businesses and workers build practical AI readiness, including adoption support, workforce transition pathways, and implementation infrastructure.

That policy movement matters because enterprise AI is no longer just a tool choice.

It is becoming a contract and compliance architecture issue.

For U.S. businesses, the first legal pressure point is internal workforce use.

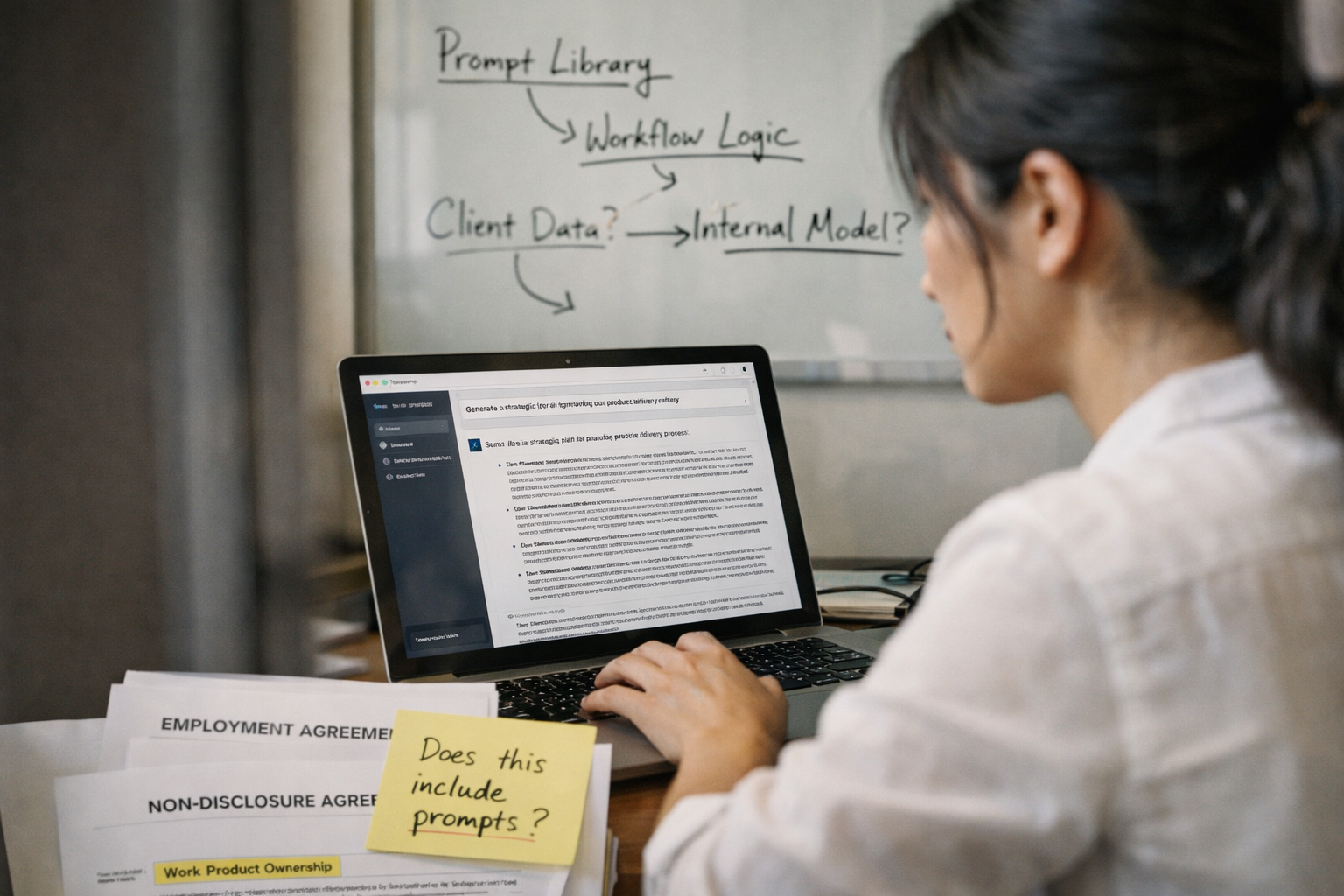

Many employee handbooks and confidentiality agreements were drafted before AI tools became part of ordinary work. As a result, they often say little or nothing about whether employees may use external models, whether prompts may include confidential information, whether AI-generated drafts are considered company work product, or whether employees can later reuse prompt libraries and workflow logic after departure.

Those gaps are becoming expensive.

A prompt sequence built by a senior sales executive may now embody the company’s pricing methodology. A legal operations workflow may include carefully structured prompts that reflect institutional judgment. A product team’s internal fine-tuning data may quietly include trade-secret logic, customer usage insights, or regulated datasets. If employment agreements do not clearly address ownership, businesses may later discover that their most valuable AI-enabled workflows sit in a gray zone between employee know-how and company IP.

The same uncertainty now extends into AI-generated work product ownership.

Companies are increasingly asking whether the business owns only the final output, or whether it also owns the prompts, system instructions, retrieval logic, fine-tuning sets, evaluation benchmarks, and derivative internal models created by employees during ordinary work. That question becomes especially sensitive in AI companies, software businesses, life sciences, and data-heavy manufacturing, where the “workflow around the model” may be more valuable than the model itself.

This is where confidentiality protections are also changing.

Traditional NDAs and employee confidentiality clauses were designed around documents, source code, formulas, and client lists. They were not drafted for a world in which an employee can unintentionally paste highly sensitive operational data into a third-party model interface that may be governed by vendor training rights, retention policies, or shared inference logs. The risk is no longer only disclosure in the traditional sense. It is data migration into an external learning environment.

That is why training data rights and internal model governance are now becoming central contract issues.

As the DOL and NSF’s AI-readiness initiative pushes workforce systems and businesses toward structured adoption, leadership teams need to ensure that governance rules keep pace with the speed of use. A company may allow teams to use enterprise AI tools but fail to define whether uploaded documents can be retained, whether vendor models can learn from prompts, whether derivative internal models belong to the employer, or who bears liability if an employee deploys unapproved AI into a regulated workflow.

The most urgent issue, however, is now appearing in AI vendor MSAs.

This is where some of the most contested gray zones in enterprise agreements are beginning to form.

Businesses must now clarify, explicitly and early, who owns prompts, outputs, derivative models, embeddings, fine-tuned weights, and evaluation data, and who bears compliance liability tied to model misuse or hallucination-driven decisions. A surprising number of vendor agreements still use legacy SaaS language that clearly addresses customer data ownership but says almost nothing about derivative AI artifacts created through use.

That silence is dangerous.

If the MSA does not expressly allocate rights, a customer may assume it owns the outputs while the vendor contract reserves rights in service improvements, training telemetry, prompt optimization, or derivative model enhancements. The same ambiguity can create liability gaps around bias, employment decisions, regulated disclosures, or confidential-data ingestion.

This is why AI deployment can no longer be treated as a procurement issue alone.

Legal, HR, compliance, product, IT, and procurement now need to review the same ownership and liability chain, from the employee use policy through the vendor MSA to downstream customer commitments.

The larger business takeaway is that enterprise AI governance is quickly becoming less about whether a company uses AI and more about whether the contracts correctly define ownership, confidentiality, derivative rights, and workforce liability around that use.

The companies that navigate this well are not simply issuing “acceptable use” memos. They are aligning employee agreements, confidentiality protections, AI vendor MSAs, internal governance rules, and work-product ownership language so that the value created by prompts, outputs, and internal models remains clearly inside the enterprise. Federal workforce policy is increasingly signaling that AI adoption and workforce transition are now governance priorities, not just innovation themes.

For founders, boards, and leadership teams deploying AI across operations, this is the right time to review whether your workforce agreements, vendor contracts, and internal governance policies actually define who owns the intelligence being created inside the business. A focused legal review often reveals where prompt ownership, output rights, derivative model claims, confidentiality duties, and compliance liability remain undefined, and it can do so before those gaps become employment disputes, vendor conflicts, or preventable IP litigation. If your company is embedding AI into everyday workflows, consider scheduling an enterprise AI governance and contract architecture review before operational adoption outruns ownership protection.